AI

Understanding the AI Stack

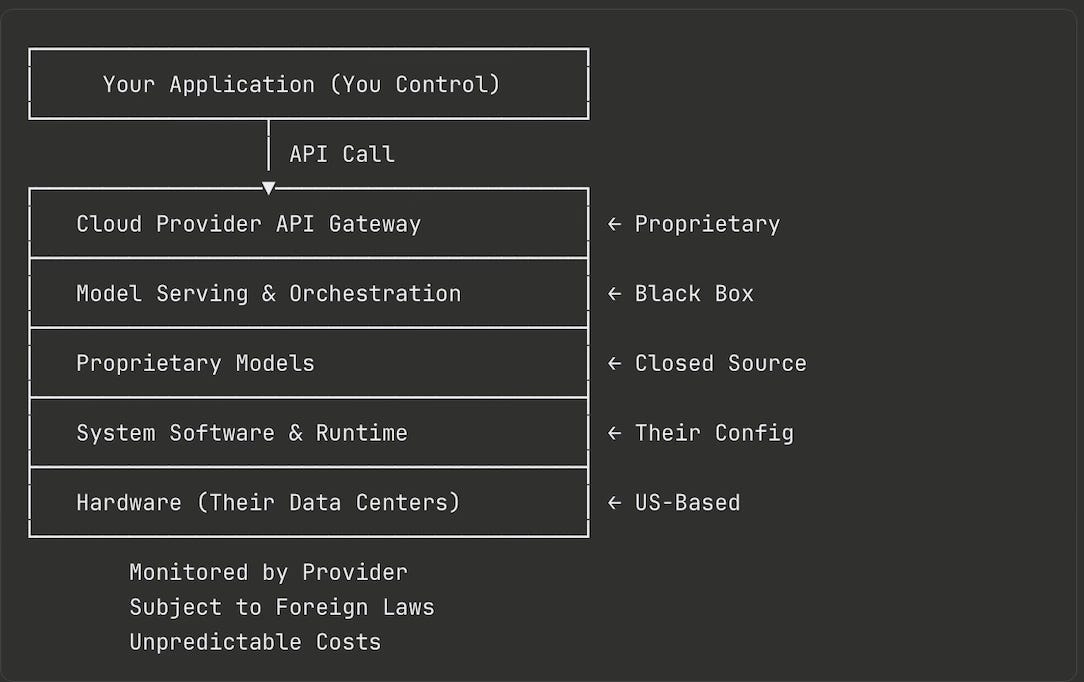

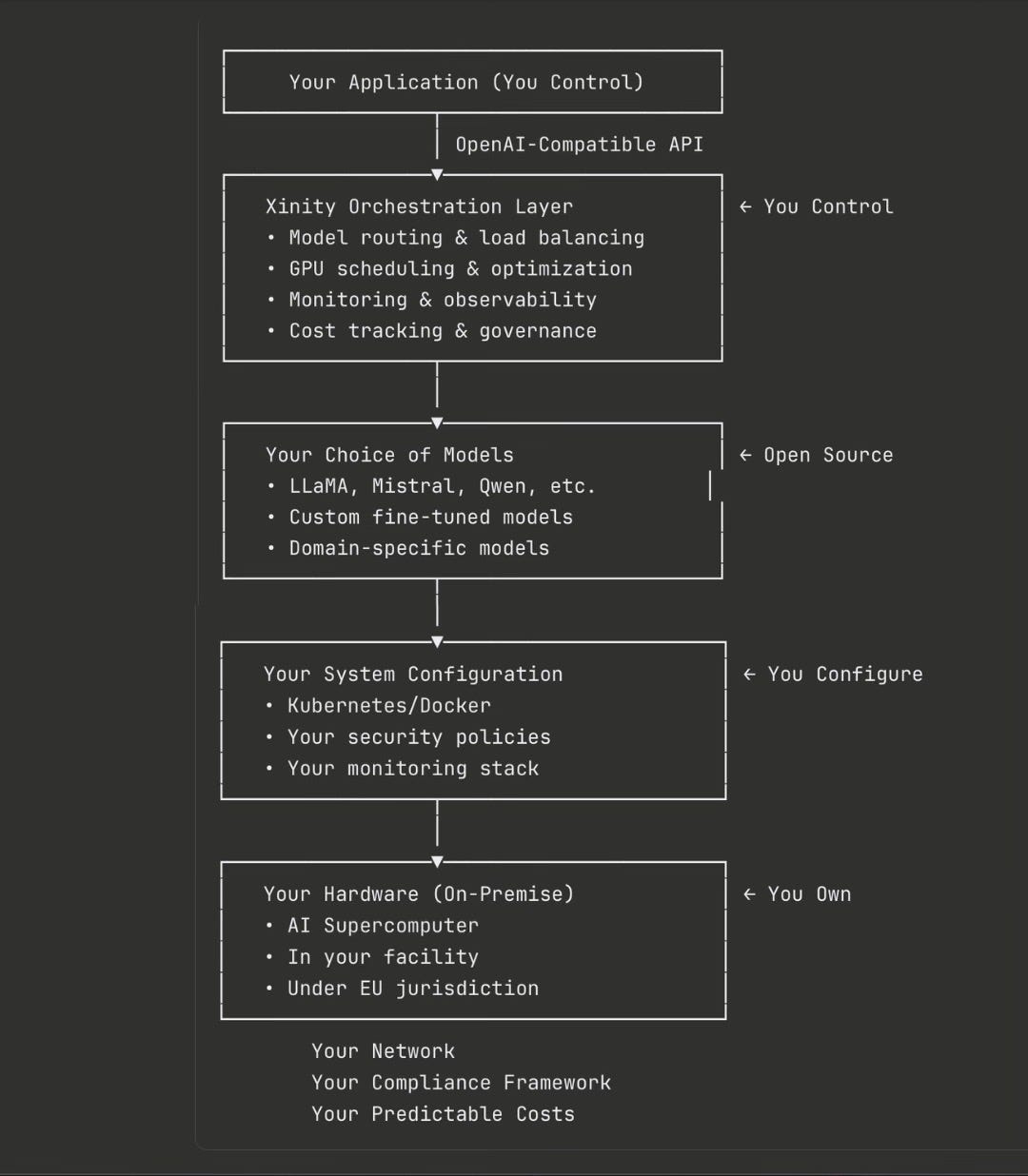

Most organizations adopting AI don’t realize they’re building on a complex stack of technologies—each layer representing a dependency, a cost center, and a control point. Understanding these layers reveals what you’re truly dependent on, and where Xinity provides sovereignty.

The Five Layers of the AI Stack

Layer 1: Hardware Infrastructure

What it is: Physical computing resources powering AI workloads—GPUs for parallel processing, AI accelerators, high-performance CPUs, memory, storage, and networking infrastructure.

Traditional control: Cloud providers own and operate massive data centers. Your workloads run on their hardware, in their facilities, under their physical control.

The dependency: You rent computing time but never own the infrastructure. Costs scale indefinitely with usage. You’re subject to availability, pricing changes, and service terms. Physical processing location is outside your control.

Layer 2: System Software & Runtime

What it is: Operating systems, container orchestration (Docker, Kubernetes), GPU drivers, CUDA libraries, resource scheduling, and security layers that manage hardware resources.

Traditional control: Cloud providers configure and maintain these systems. You get limited access through APIs. Updates, patches, and configurations are managed externally.

The dependency: Limited visibility into system configuration. Cannot customize low-level optimizations. Dependent on provider’s security practices and system-level compliance.

Layer 3: AI Model Serving & Orchestration

What it is: The infrastructure layer that loads models, handles requests, and manages inference—including model initialization, request routing, GPU allocation, caching, optimization, API gateways, and monitoring.

Traditional control: Cloud AI providers package this as a black box. You send requests, they return results. No visibility into how models are loaded, cached, or optimized.

The dependency: Cannot optimize for your specific workload patterns. No custom routing logic. Limited to provider’s supported models. Opaque performance characteristics.

🎯 This Is Where Xinity Operates

Xinity provides the orchestration layer between your applications and AI models, giving you:

Complete control over model serving infrastructure

Intelligent routing across multiple models and GPUs

Custom optimization for your workload patterns

Full visibility into performance and resource usage

OpenAI-compatible APIs for seamless integration

Layer 4: AI Models

What it is: The actual neural networks—foundation models (LLMs, vision models), fine-tuned models, specialized domain models, embeddings, and multi-modal models.

Traditional control: Cloud providers offer access to proprietary models you can use but don’t own. Model weights and architectures are often closed. Fine-tuning may be limited or expensive.

The dependency: Cannot inspect model internals. Limited customization. Model updates on provider’s schedule. Pricing tied to model sophistication.

How Xinity addresses this:

Deploy any open-source model you choose

Run custom fine-tuned models on your data

Full ownership of model weights and training data

Freedom to experiment with cutting-edge open models

No restrictions on modification or specialization

Layer 5: Applications & Interfaces

What it is: User-facing applications and APIs consuming AI capabilities—chat interfaces, document processing, code generation, customer service bots, analysis tools, and business-specific applications.

Traditional control: You build the application, but it’s tightly coupled to specific cloud AI APIs. Switching providers means rewriting code.

How Xinity addresses this:

OpenAI-compatible API means your applications work unchanged

Drop-in replacement by changing base URL and API key

No vendor lock-in at the application layer

Freedom to switch or multi-source models

The Stack Comparison

Traditional Cloud AI: Hidden Dependencies

You control: Your application code

You don’t control: Everything else—monitoring, jurisdiction, costs, configuration

Xinity Sovereign AI Stack: Full Control

You control: Everything from hardware up

What Xinity provides: The intelligent orchestration layer that makes it all work seamlessly

The Problem Xinity Solves

The False Choice

Most organizations face two bad options:

Option A: Cloud AI Services

✅ Easy to use with simple APIs

✅ No infrastructure management

❌ Data leaves your control

❌ Unpredictable, scaling costs

❌ Vendor lock-in

❌ Subject to foreign jurisdiction

Option B: Build Everything Yourself

✅ Full control over infrastructure

✅ Data stays on-premise

❌ Requires 12+ months of development

❌ Need deep ML engineering expertise

❌ Complex model serving infrastructure

❌ Ongoing maintenance burden

Xinity: The Third Way

✅ Cloud-like simplicity with OpenAI-compatible APIs

✅ Full data sovereignty on your hardware

✅ Production-ready in days, not months

✅ No ML engineering expertise required

✅ Predictable infrastructure-based costs

✅ European jurisdiction and GDPR compliance

What Makes Xinity’s Orchestration Essential

1. Intelligent Model Routing

Not all queries need your most powerful model. Xinity routes requests based on query complexity, required quality, available GPU resources, cost constraints, and latency requirements.

Real impact: Simple factual queries route to smaller, faster models while complex reasoning tasks use your most capable model—optimizing both performance and cost.

2. GPU Resource Optimization

GPUs are expensive. Xinity maximizes value through on-demand model loading, request batching, workload-based allocation, and prevention of resource contention.

Real impact: Run 3-5x more inference throughput on the same hardware compared to naive implementations.

3. Production-Grade Reliability

Enterprise AI needs enterprise reliability: automatic failover, health monitoring with automatic recovery, request queuing during high load, and graceful degradation strategies.

Real impact: Your applications stay responsive even when individual components fail.

4. Observability & Governance

Comprehensive visibility with request-level logging and tracing, cost attribution by team or project, performance metrics and bottleneck identification, and audit trails for compliance.

Real impact: Know exactly where AI resources are going and prove compliance to auditors.

5. Zero-Friction Migration

OpenAI-compatible API means changing two lines of code (base URL and API key), keeping existing prompt engineering, maintaining current workflows, and requiring no team training.

Real impact: Migrate production applications in hours, not months.

Use Cases: Where Xinity Matters Most

Multi-Team Enterprises

Challenge: Different teams using AI independently, no cost visibility, resource conflicts

Xinity Solution: Centralized control plane, per-team quotas and policies, shared GPU infrastructure with fair scheduling, unified billing and cost allocation

Regulated Industries

Challenge: Need AI capabilities but cannot send data to cloud providers due to compliance

Xinity Solution: Complete data residency on your hardware, full audit trails, compliance-ready (GDPR, sector-specific regulations), explainable routing and decision-making

Cost-Sensitive Deployments

Challenge: Cloud AI costs spiraling as usage grows, need cost predictability

Xinity Solution: Infrastructure-based pricing (not per-token), intelligent routing to minimize compute, complete cost visibility, 70-90% cost reduction vs. cloud

Custom Model Deployments

Challenge: Need to run fine-tuned or domain-specific models unavailable from cloud providers

Xinity Solution: Deploy any open-source model, run custom fine-tuned models, A/B test multiple versions, gradual rollout of updates

Why the Orchestration Layer Matters

Running AI models on your own hardware isn’t enough for sovereign AI. You need:

An intelligent layer that makes optimal use of your resources

Production-grade reliability that enterprises require

Developer-friendly APIs that enable fast migration

Observability and governance for compliance and optimization

This orchestration layer is complex to build—it took cloud providers years to develop theirs. Xinity brings that same sophistication to on-premise deployments, making sovereign AI practical for organizations that need control without complexity.

Xinity doesn’t just give you infrastructure. It gives you the missing piece that makes sovereign AI actually work in production.

Ready to See Xinity in Action?

Learn how Xinity can bring cloud-like AI capabilities to your on-premise infrastructure.

YOUR AI. YOUR SERVERS.

Ready to Run any AI on Your Own Terms?

No commitment. 30 minutes. We'll show you exactly what deployment looks like for your company.

Use Link

Company

Am Gestade 5/2

1010 Vienna, Austria

© 2026 Xinity

YOUR AI. YOUR SERVERS.

Ready to Run any AI on Your Own Terms?

No commitment. 30 minutes. We'll show you exactly what deployment looks like for your company.

Use Link

Company

Am Gestade 5/2

1010 Vienna, Austria

© 2026 Xinity

YOUR AI. YOUR SERVERS.

Ready to Run any AI on Your Own Terms?

No commitment. 30 minutes. We'll show you exactly what deployment looks like for your company.

Use Link

Company

Am Gestade 5/2

1010 Vienna, Austria